Backdoor defense method based on self-supervised learning and dataset splitting

Keywords: deep learning; backdoor defense; semi-supervised learning; image classification; self-supervised learning

CLC number: TP309.2 Document code: A Article ID: 1001-3695(2026)01-031-0256-07

doi:10.19734/j.issn.1001-3695.2025.05.0190

Backdoor defense via self-supervised learning and dataset splitting

He Zisheng, Ling Jie (School of Computer Science and Technology, Guangdong University of Technology, Guangzhou 510006, China)

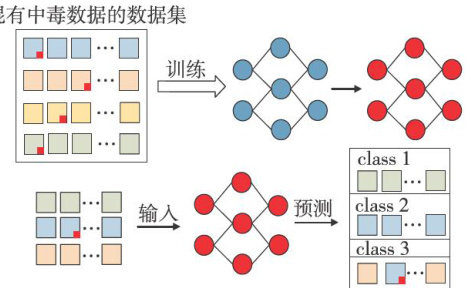

Abstract: To address the vulnerability of deep neural networks (DNNs) to backdoor attacks in image classification and the difficulty existing defenses have in balancing model accuracy and robustness, this paper proposes a semi-supervised backdoor defense method named SAS, which is based on self-supervised pre-training and dynamic dataset splitting. The method first employs a self-supervised training phase that uses a contrastive learning framework with consistency regularization to decouple image features from backdoor patterns. The fine-tuning stage then combines dynamic data selection with semi-supervised learning: it identifies high-confidence and low-confidence data and uses them separately during training to suppress backdoor implantation. Experiments on the CIFAR-10 and GTSRB datasets against four attacks (BadNets, Blend, WaNet, and Refool) show that, compared with the AS method, the proposed method improves classification accuracy on clean data by an average of 1.65 and 0.65 percentage points, respectively, and consistently reduces the backdoor attack success rate on poisoned data to below 1.4%. The results confirm that the synergy between feature decoupling and dynamic dataset splitting enables the method to effectively enhance the model's backdoor robustness while maintaining high performance on clean data, providing an effective pathway for building secure and reliable deep learning models.

Key words: deep learning; backdoor defense; semi-supervised learning; image classification; self-supervised learning
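
The abstract's dynamic dataset splitting step can be illustrated with a minimal sketch. It assumes that confidence is measured by per-sample cross-entropy loss and that a fixed fraction of lowest-loss samples forms the high-confidence pool; the abstract does not state the actual criterion, so the function names `cross_entropy_per_sample` and `split_by_confidence` and the parameter `keep_ratio` are hypothetical and this is not the authors' implementation.

```python
import numpy as np


def cross_entropy_per_sample(probs, labels):
    """Per-sample cross-entropy of predicted class probabilities against given labels."""
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    picked = probs[np.arange(labels.size), labels]
    # Clip to avoid log(0) on degenerate predictions.
    return -np.log(np.clip(picked, 1e-12, 1.0))


def split_by_confidence(losses, keep_ratio=0.5):
    """Split sample indices into a high-confidence pool (lowest losses) and the rest.

    Assumption for illustration: low loss means the (possibly poisoned) label is
    trusted; the remaining samples would have their labels discarded and be used
    as unlabeled data by a semi-supervised learner.
    """
    losses = np.asarray(losses, dtype=float)
    n_keep = int(round(keep_ratio * losses.size))
    order = np.argsort(losses, kind="stable")
    high_idx = np.sort(order[:n_keep])
    low_idx = np.sort(order[n_keep:])
    return high_idx, low_idx


# Toy example: four samples, two classes.
probs = np.array([[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.3, 0.7]])
labels = np.array([0, 1, 1, 0])
losses = cross_entropy_per_sample(probs, labels)
high_idx, low_idx = split_by_confidence(losses, keep_ratio=0.5)
```

In a training loop, such a split would presumably be recomputed every few epochs so that the two pools track the current model, which is what makes the splitting dynamic.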

0 Introduction

... training a clean model that is unaffected by backdoors on [...] datasets has attracted wide attention from researchers in China and abroad.