Research and Application of Multi-Focus Image Fusion Based on CNN and Transformer Architectures


Keywords: multi-focus image fusion; Transformer; multi-head attention mechanism; chip recognition; chip inspection
CLC number: TP391.41; Document code: A; doi: 10.37188/OPE.20253322.3577; CSTR: 32169.14.OPE.20253322.3577

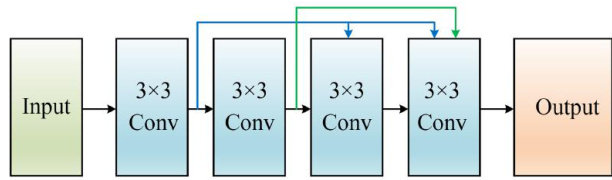

Abstract: To address the limitation that a single focused image cannot simultaneously present complete scene information, this paper proposes an end-to-end multi-focus image fusion algorithm aimed at enhancing fusion accuracy and practicality. A parallel encoder architecture that combines dense convolution and Transformer is constructed to effectively extract both high-frequency and low-frequency features. A spatial-attention mechanism is introduced to further enhance feature representation. In the fusion stage, a semantic-prior-guided cross-fusion strategy is designed to embed high-frequency details under the guidance of low-frequency information. This approach effectively mitigates the bias toward near-focus or far-focus regions seen in traditional methods and significantly improves contrast and detail preservation in the fused image. Compared with recent state-of-the-art methods and seven advanced image fusion algorithms on the Lytro, COCO, and MFFW datasets, the proposed method demonstrates significant advantages across multiple metrics, achieving improvements of 2.7% in entropy (EN), 13.6% in peak signal-to-noise ratio (PSNR), 7.9% in structural similarity (SSIM), 6.5% in mutual information (MI), 1.6% in average gradient (AG), and 3.7% in spatial frequency (SF). In downstream tasks such as chip pin number recognition and chip center localization, the proposed method also achieves notable performance improvements, verifying its effectiveness and generalizability. The proposed network exhibits excellent performance in both fusion quality and downstream application tasks, meeting the requirements of fast and accurate multi-focus image fusion in practical scenarios.

Key words: multi-focus image fusion; Transformer; multi-head attention mechanism; chip recognition; chip inspection
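
For readers unfamiliar with the no-reference metrics named in the abstract, the following is a minimal sketch of how EN, SF, and AG are commonly computed for an 8-bit grayscale image. The function names and the exact normalizations are assumptions taken from common usage in the fusion literature; the paper's own evaluation code may differ in detail.

```python
import numpy as np

def entropy(img):
    """Shannon entropy (EN, in bits) of the gray-level histogram of a uint8 image."""
    hist = np.bincount(img.ravel(), minlength=256).astype(np.float64)
    p = hist / hist.sum()
    p = p[p > 0]  # skip empty bins so log2 is defined
    return float(-np.sum(p * np.log2(p)))

def spatial_frequency(img):
    """Spatial frequency (SF): root of mean squared row plus column differences."""
    f = img.astype(np.float64)
    rf2 = np.mean(np.diff(f, axis=1) ** 2)  # horizontal (row-frequency) term
    cf2 = np.mean(np.diff(f, axis=0) ** 2)  # vertical (column-frequency) term
    return float(np.sqrt(rf2 + cf2))

def average_gradient(img):
    """Average gradient (AG): mean of sqrt((gx^2 + gy^2) / 2) over the image."""
    f = img.astype(np.float64)
    gx = f[:-1, 1:] - f[:-1, :-1]  # forward difference along x
    gy = f[1:, :-1] - f[:-1, :-1]  # forward difference along y
    return float(np.mean(np.sqrt((gx ** 2 + gy ** 2) / 2.0)))

# Toy input (assumption, for illustration only): left half black, right half white.
demo = np.zeros((4, 4), dtype=np.uint8)
demo[:, 2:] = 255
en, sf, ag = entropy(demo), spatial_frequency(demo), average_gradient(demo)
```

Higher values of all three generally indicate more information content and sharper detail in the fused image, which is why they are reported alongside the reference-based metrics.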

1 Introduction

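Multimodal image fusion technology [1] aims to extract complementary information from different modalities, such as the rich details in visible-light images and the heat-source information in infrared images that is robust to illumination or weather conditions, and to integrate it into a single image.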