Hand-object interaction pose estimation combining multi-modal features and structure awareness


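Keywords: hand-object pose estimation; graph convolutional network; multi-modal features; structure awareness

CLC number: TP391.41

Document code: A

doi: 10.37188/OPE.20253320.3265

CSTR: 32169.14.OPE.20253320.3265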

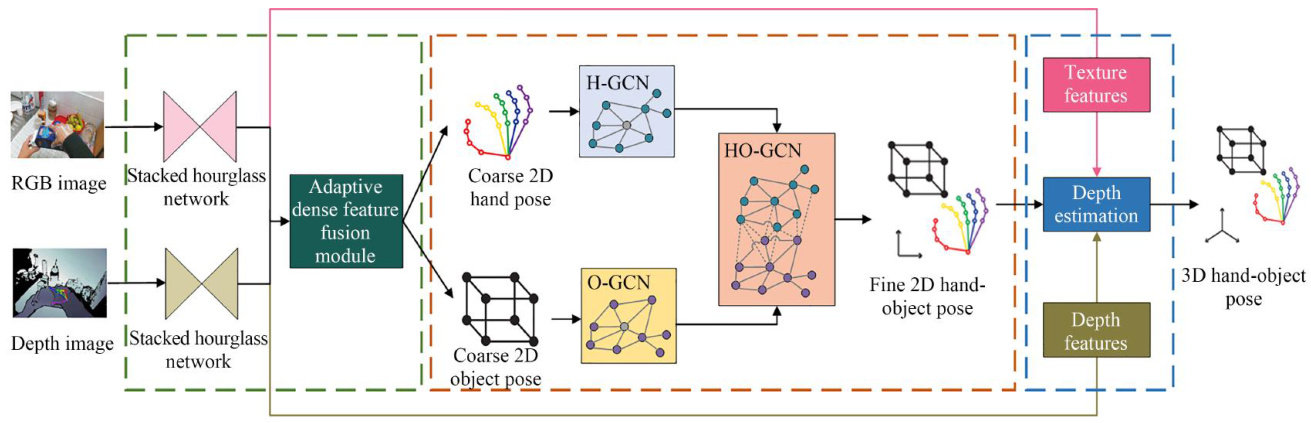

Abstract: In the real world, hands inevitably interact with objects, and understanding the interaction behaviors and intentions between human hands and objects is of great research significance. This paper addresses the low accuracy of pose estimation during hand-object interaction, which is caused by mutual hand-object occlusion, hand self-occlusion, and complex backgrounds. A 3D pose-estimation method for hands and interacting objects is proposed that combines multi-modal features with structure awareness. First, the method exploits the complementary information in the multi-modal features of color and depth images to mitigate the effects of complex backgrounds, hand self-occlusion, and hand-object mutual occlusion. Second, graph-structure-based awareness modules for the hand, the object, and their interaction are designed to help estimate more reasonable and accurate 2D poses. Finally, the estimated 2D poses are merged with the depth information from the depth image, and texture features are used to refine the merged 3D poses into the final 3D pose of the hand-object interaction. To verify the method's effectiveness, experiments were conducted on datasets including FPHA and HO-3D. The hand and object pose errors are reduced to 9.62 mm and 14.37 mm, respectively. The results show that the proposed method outperforms existing methods and exhibits strong robustness and generalization.

Key words: hand-object pose estimation; graph convolutional network; multi-modal features; structure awareness

1 Introduction

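In everyday human life, the hand serves as a key tool for interaction, and its dexterity and the diversity of its poses cannot be matched by any other part of the human body.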